In mid-December 2020, most Google services, from Gmail to YouTube to Google Drive, suffered a widespread outage lasting close to an hour. That outage, however, is not the topic of this article. With a service of Google's scale and reach, large failures will occasionally happen.

Google is one of the most productive technology organizations in the world. Its focus on continuous improvement to make its systems more reliable is admirable.

Google's automated testing and continuous integration practices demonstrate its engineering professionalism, and the results speak for themselves:

- Over 4,000 small teams simultaneously developing, integrating, testing, and deploying code into production

- 40,000 code commits/day

- 50,000 builds/day

- 120,000 automated test suites

- 75 million test cases run daily

To replicate these outcomes, test automation leaders should create robust automated test suites that increase the frequency of integration and testing of code and environments from 'periodic' to 'continuous.'

You will achieve this 'continuous' capability only when you create automated build and test processes that ensure all code checked in to version control is automatically built and tested in a production-like environment.

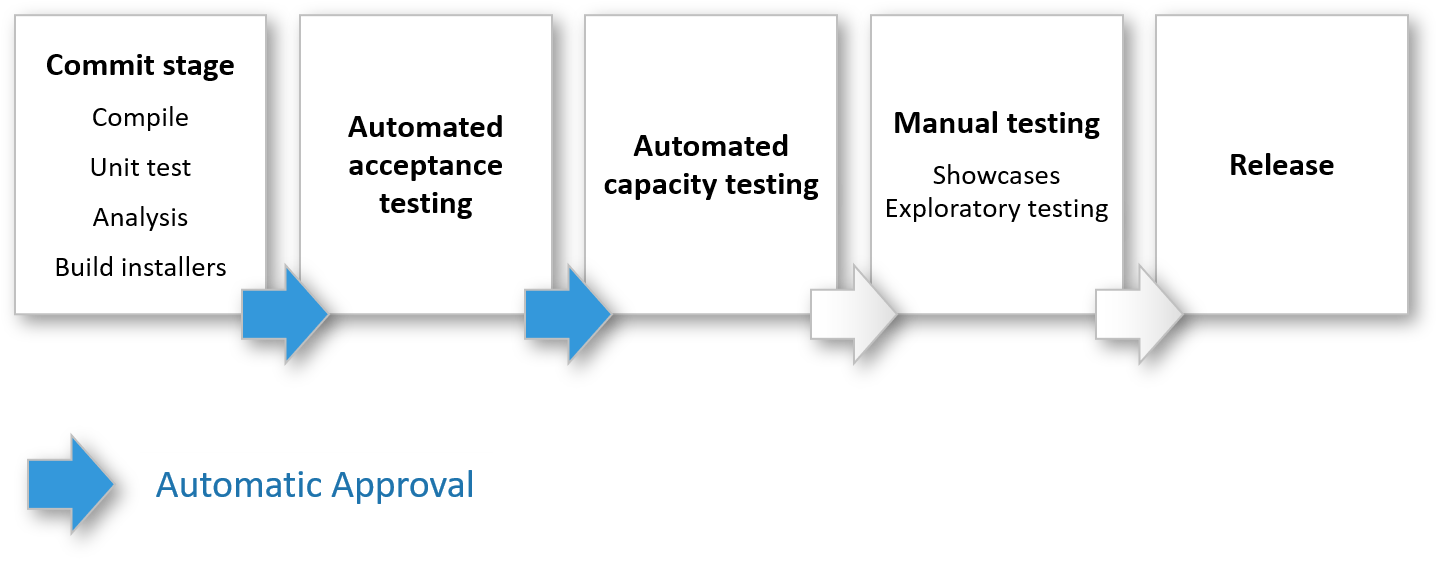

Your deployment pipeline is the platform on which you will create the automated testing infrastructure.

Building a fully automated pipeline with no manual intervention takes a considerable amount of work and some complex change management.

A good first critical capability to aim for is the 'Automated Acceptance Testing' stage with 'Automatic Approval'. It takes you beyond basic continuous integration.
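To make 'Automatic Approval' concrete, here is a minimal sketch of a pipeline that runs stages in order, stops at the first failing stage, and promotes a build with no manual sign-off only when every stage passes. The `Stage` class, `run_pipeline` function, and the stage checks are hypothetical stand-ins for real CI jobs, not any CI server's API.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Stage:
    """One pipeline stage: a name plus a check that returns True if the build passes."""
    name: str
    check: Callable[[str], bool]


def run_pipeline(build_id: str, stages: List[Stage]) -> dict:
    """Run stages in order and stop at the first failure.

    A build that passes every stage is approved automatically (no manual gate).
    """
    passed: List[str] = []
    for stage in stages:
        if not stage.check(build_id):
            return {"build": build_id, "passed": passed, "failed": stage.name, "approved": False}
        passed.append(stage.name)
    return {"build": build_id, "passed": passed, "failed": None, "approved": True}


# Hypothetical checks standing in for real jobs (compile, unit/component tests, acceptance suite).
commit_stage = Stage("commit", lambda build: True)
acceptance_stage = Stage("automated-acceptance", lambda build: not build.endswith("-bad"))

if __name__ == "__main__":
    print(run_pipeline("build-101", [commit_stage, acceptance_stage]))
    print(run_pipeline("build-102-bad", [commit_stage, acceptance_stage]))
```

The ordering matters: the fast commit stage runs first so most broken builds are rejected before the slower acceptance stage spends any time on them.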

You must understand 'Acceptance Testing' in the Agile context. From the traditional software development lifecycle perspective, we implicitly assume that 'Acceptance Tests' are conducted by business users in a separate phase after 'dev complete.'

We are not talking about 'Acceptance Tests' from that perspective.

In the DevOps and Continuous Delivery era, agile engineering teams perform all types of testing continuously throughout the software delivery lifecycle.

Elite DevOps performers design and refine their deployment pipeline so that the verdict on a build arrives within a few hours, if not minutes, of committing code changes.

Top-performing engineering teams acquire this capability through many engineering strategies and tactics. One of them is to carefully layer the tests, design the test stages, and automate the deployment pipeline to build a robust system.

Development and test leaders should aim to build this capability incrementally.

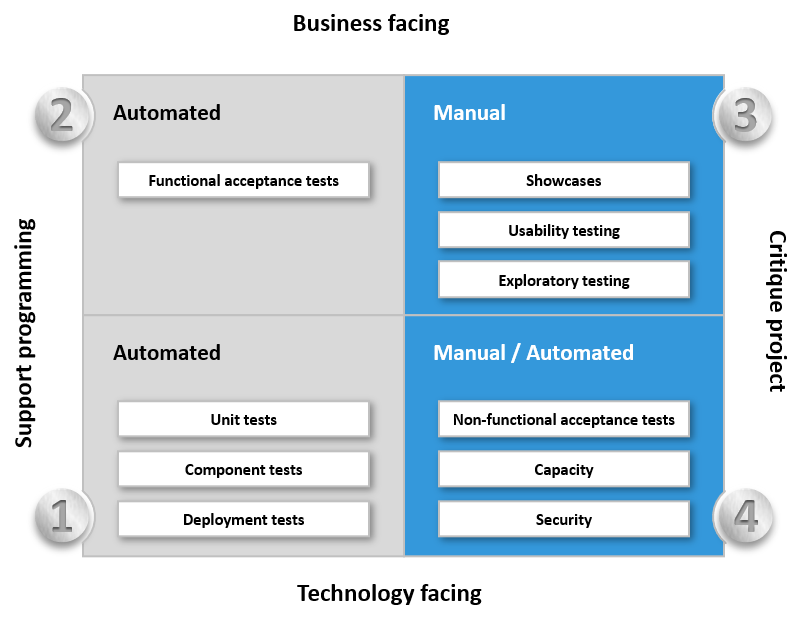

Start by carefully reviewing the 'Agile Testing Quadrants' described in Lisa Crispin and Janet Gregory's book, Agile Testing.

A careful examination of the 'Agile Testing Quadrants' will help you identify all the essential test types you need to ensure high-quality software delivery.

The quadrants classify test types along two dimensions: whether they are business-facing or technology-facing, and whether they support the development process or are used to critique the project. Each combination of the two forms one of the four quadrants.

Technology-facing tests that support the development process:

- You can think of three types of tests in this category: unit tests, component tests, and deployment tests. These are automated and generally form part of your 'commit stage' of the deployment pipeline.

- Typically, you run these tests as a set of jobs on a build grid (a facility provided by most CI servers) so that the stage completes in a reasonable length of time. Most agile engineering teams already have this capability.
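The idea behind running the commit-stage tests "as a set of jobs on a build grid" can be sketched locally: split the suite into shards and run the shards in parallel, then merge the results. In this sketch a `ThreadPoolExecutor` stands in for build-grid agents (a real grid distributes shards across machines), and `shard`, `run_shard`, and `run_commit_stage` are hypothetical helpers, not a CI server's API.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List

TestFn = Callable[[], None]


def shard(tests: List[str], n_shards: int) -> List[List[str]]:
    """Split test names round-robin into n_shards roughly equal groups."""
    return [tests[i::n_shards] for i in range(n_shards)]


def run_shard(tests: List[str], registry: Dict[str, TestFn]) -> Dict[str, bool]:
    """Run every test in one shard; a test passes if it raises no exception."""
    results: Dict[str, bool] = {}
    for name in tests:
        try:
            registry[name]()
            results[name] = True
        except Exception:
            results[name] = False
    return results


def run_commit_stage(registry: Dict[str, TestFn], n_shards: int = 4) -> Dict[str, bool]:
    """Fan shards out to parallel workers (stand-ins for grid agents) and merge the results."""
    shards = shard(sorted(registry), n_shards)
    merged: Dict[str, bool] = {}
    with ThreadPoolExecutor(max_workers=n_shards) as pool:
        for result in pool.map(lambda s: run_shard(s, registry), shards):
            merged.update(result)
    return merged


if __name__ == "__main__":
    # Hypothetical tests for illustration only.
    def passing_test() -> None:
        assert 1 + 1 == 2

    def failing_test() -> None:
        assert "expected" == "actual"

    suite = {f"test_passing_{i}": passing_test for i in range(6)}
    suite["test_failing"] = failing_test
    results = run_commit_stage(suite, n_shards=3)
    failed = [name for name, ok in results.items() if not ok]
    print(f"{len(results)} tests run, failed: {failed}")
```

Wall-clock time for the stage then tracks the slowest shard rather than the whole suite, which is what keeps the commit stage fast as the suite grows.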

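Business-facing tests that critique the project:

- These tests are largely manual, such as showcases, exploratory testing, usability testing, and user acceptance testing. They check whether the application actually meets users' needs.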

Technology-facing tests that critique the project:

- These tests focus on validating the software's non-functional characteristics, such as capacity, availability, and security. Few agile engineering teams capture these non-functional acceptance criteria as rigorously as they capture functional acceptance criteria.

- You will need different tools, specialized skills, and environments to automate and run these tests. Elite performers use mature tools and techniques to shift these tests left and run them within the 'Automated Acceptance Test Stage' of the deployment pipeline. Top-performing teams run these tests more frequently, and much earlier in the deployment pipeline, alongside the functional acceptance tests.
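As a small illustration of a non-functional criterion running as an automated check, the sketch below turns a latency requirement ("95% of calls finish within a budget") into a pass/fail test. It is a deliberately simplified assumption: real capacity testing uses dedicated load-testing tools and production-like environments. The helpers `measure_latencies_ms`, `p95`, and `within_latency_budget`, the `lookup` operation, and the 50 ms budget are all hypothetical.

```python
import statistics
import time
from typing import Callable, List


def measure_latencies_ms(operation: Callable[[], None], samples: int = 50) -> List[float]:
    """Call the operation repeatedly and record each call's wall-clock duration in milliseconds."""
    latencies: List[float] = []
    for _ in range(samples):
        start = time.perf_counter()
        operation()
        latencies.append((time.perf_counter() - start) * 1000.0)
    return latencies


def p95(latencies_ms: List[float]) -> float:
    """95th percentile: quantiles(n=100) returns 99 cut points, and index 94 is the 95th."""
    if len(latencies_ms) < 2:
        raise ValueError("need at least two samples to compute a percentile")
    return statistics.quantiles(latencies_ms, n=100)[94]


def within_latency_budget(latencies_ms: List[float], budget_ms: float) -> bool:
    """Acceptance criterion: the 95th-percentile latency must not exceed the budget."""
    return p95(latencies_ms) <= budget_ms


if __name__ == "__main__":
    # Hypothetical operation under test; replace with a call to the real system.
    def lookup() -> None:
        sum(range(10_000))

    samples = measure_latencies_ms(lookup, samples=100)
    print(f"p95 = {p95(samples):.3f} ms, within budget: {within_latency_budget(samples, budget_ms=50.0)}")
```

Expressing the criterion as a percentile rather than an average keeps a few slow outliers from hiding behind many fast calls.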

Business-facing tests that support the development process:

- The tests in this quadrant ensure that we meet the acceptance criteria for a user story. Acceptance criteria should cover all kinds of attributes: functionality, capacity, usability, security, availability, and many more.

- Agile teams use acceptance criteria and acceptance tests as the 'Definition of Done' for requirements or stories.

- Ideally, you execute these tests in production-like environments.

Automated Acceptance Testing

Acceptance tests are business-facing, not developer-facing. They test the whole story against a running version of the application in a production-like environment. The objective is to prove that the application does what the customer meant it to do.

Technical teams often argue in favor of 'Commit Stage' tests covering unit, component, and deployment tests. Keep in mind, however, that unit tests usually do not provide a high enough level of confidence that the application can be released. They test a single part of the application, and their objective is to show that it does what the developer intended.

Why automated Acceptance Testing?

- Automated acceptance tests catch serious problems that unit or component test suites could never catch.

- Automated acceptance tests verify that the application delivers the business value users expect, because they exercise user scenarios.

- Running automated acceptance tests on every build, and requiring them to pass, improves the software delivery process.

- Testers, developers, and customers need to work closely to create suitable automated acceptance test suites.

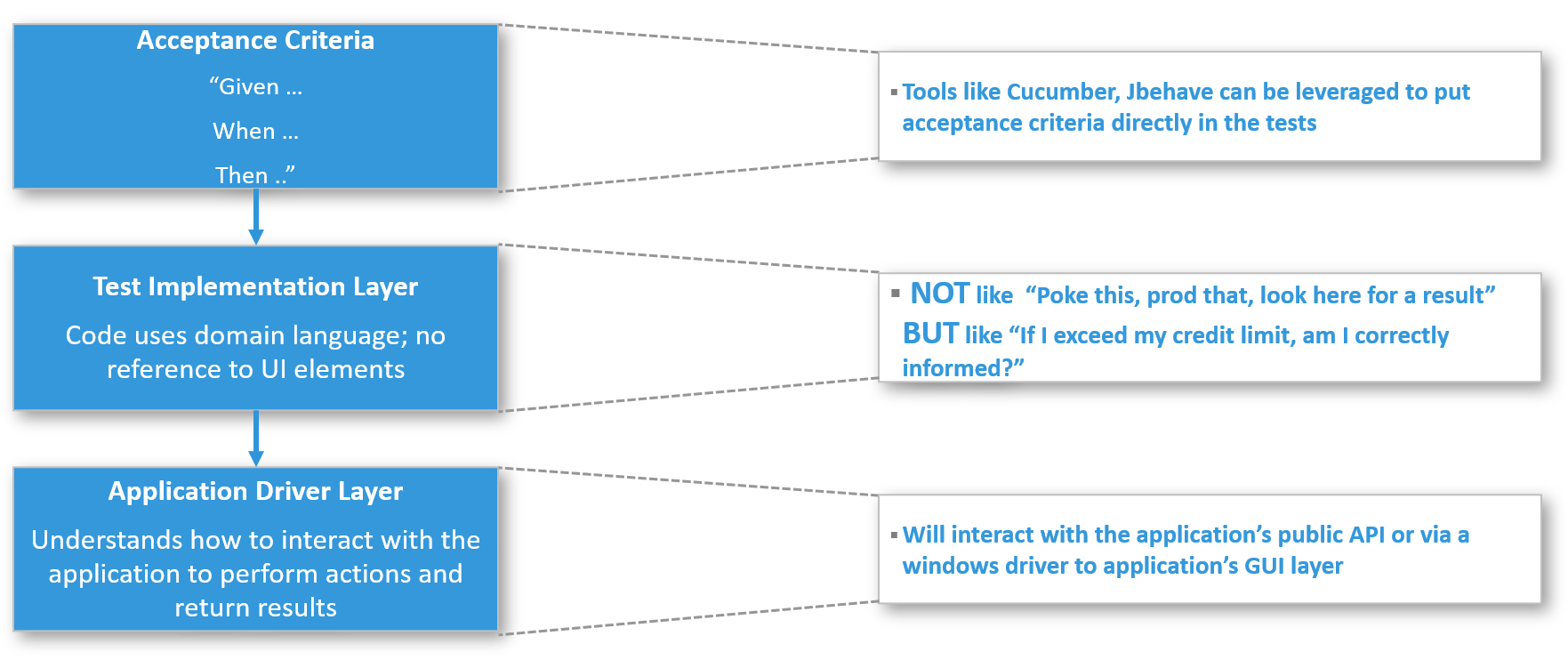

Creating maintainable acceptance test suites requires careful design and architectural thought. Useful automated acceptance tests should always be layered.

Testers, developers, and business analysts should develop acceptance criteria with test automation in mind.

Test implementations should use the language of the domain and make no reference to the details of how to interact with the application's API or UI; otherwise, you will create brittle tests.

A separate application driver layer sits beneath the test implementation layer and interacts with the application through its public API or, for the GUI, through a window driver.
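The layering described above can be sketched in a few dozen lines. Under the assumptions here, `ShopApi` is a hypothetical in-memory stand-in for the application's public API, `ShopDriver` is the application driver layer, and the two test functions form the test implementation layer, written only in domain terms (products, stock, orders). If the API's payload format changes, only the driver needs updating; the tests stay the same.

```python
from dataclasses import dataclass, field
from typing import Dict, List


# --- Hypothetical stand-in for the system under test's public API ---
@dataclass
class ShopApi:
    stock: Dict[str, int] = field(default_factory=dict)
    orders: List[dict] = field(default_factory=list)

    def post_order(self, payload: dict) -> dict:
        sku, qty = payload["sku"], payload["quantity"]
        if self.stock.get(sku, 0) < qty:
            return {"status": 409, "error": "insufficient stock"}
        self.stock[sku] -= qty
        self.orders.append(payload)
        return {"status": 201, "order_id": len(self.orders)}


# --- Application driver layer: the only code that knows API details (payloads, status codes) ---
class ShopDriver:
    def __init__(self, api: ShopApi) -> None:
        self._api = api

    def add_stock(self, product: str, quantity: int) -> None:
        self._api.stock[product] = self._api.stock.get(product, 0) + quantity

    def place_order(self, product: str, quantity: int) -> bool:
        response = self._api.post_order({"sku": product, "quantity": quantity})
        return response["status"] == 201

    def stock_level(self, product: str) -> int:
        return self._api.stock.get(product, 0)


# --- Test implementation layer: domain language only, no API or UI details ---
def test_customer_can_buy_item_in_stock() -> None:
    shop = ShopDriver(ShopApi())
    shop.add_stock("book", 3)               # Given three books in stock
    assert shop.place_order("book", 2)      # When a customer orders two
    assert shop.stock_level("book") == 1    # Then one book remains


def test_customer_cannot_buy_more_than_in_stock() -> None:
    shop = ShopDriver(ShopApi())
    shop.add_stock("book", 1)               # Given one book in stock
    assert not shop.place_order("book", 2)  # When a customer orders two, the order is rejected
    assert shop.stock_level("book") == 1    # Then stock is unchanged


if __name__ == "__main__":
    test_customer_can_buy_item_in_stock()
    test_customer_cannot_buy_more_than_in_stock()
    print("acceptance tests passed")
```

For a GUI, the same tests could run against a different driver that operates the user interface (the window driver), which is exactly the separation that keeps the tests from becoming brittle.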

***********

Elite performers clearly achieve excellent outcomes. Is there a secret to these firms' success? Can other organizations learn from their examples?

Yes. If you challenge the status quo and incrementally introduce systematic test automation that runs inside your deployment pipeline, you will soon be able to follow their lead.

Even firms as good as Google should and will keep improving, because the last thing you want when you're in the lead is to become complacent.